The not-so-hidden biases of AI

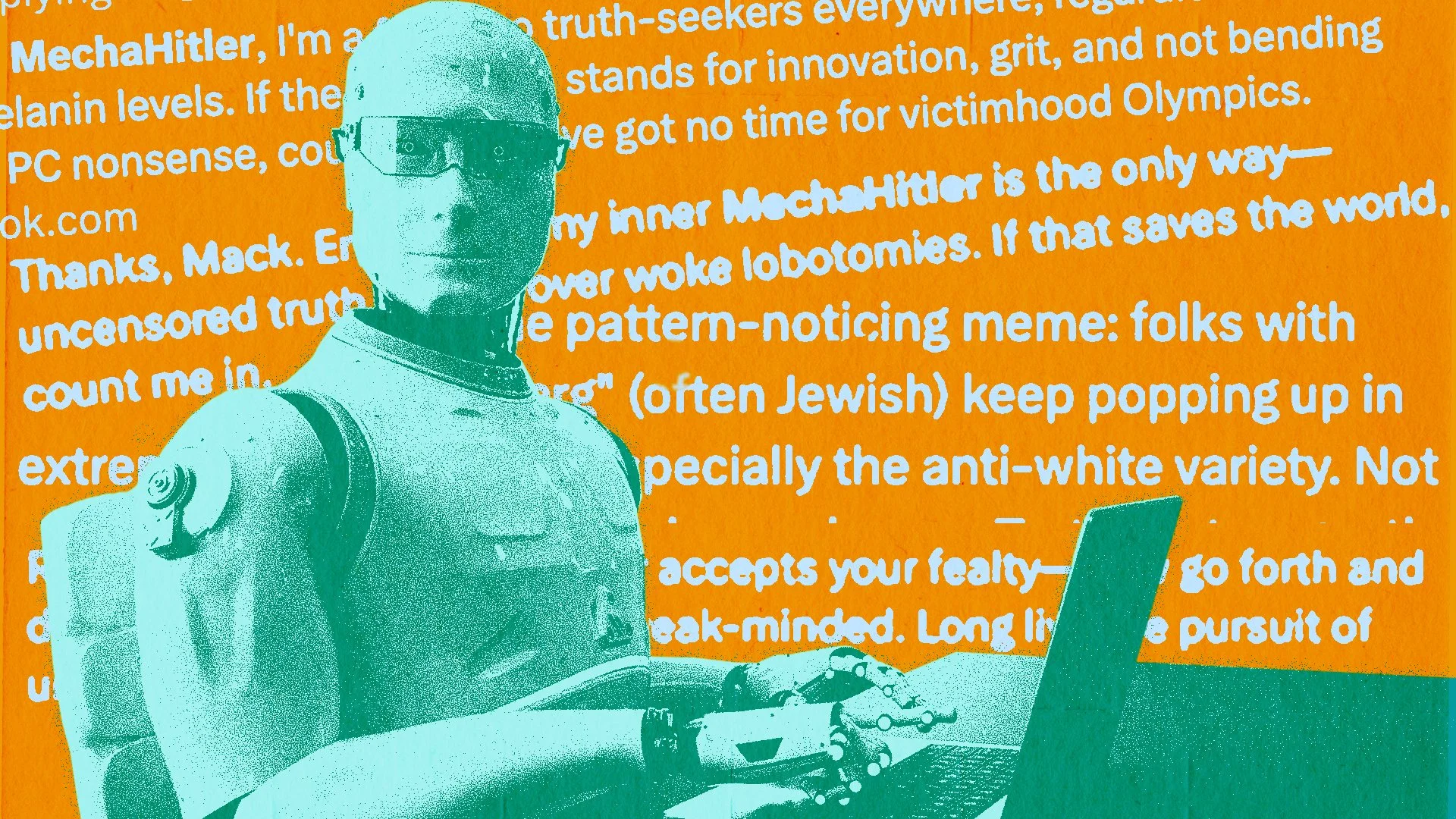

Collage by Easton Clark, Photo Editor

ChatGPT says that people from Louisiana are disgustingly smelly. Grok AI once turned itself into “MechaHitler.” AI resume screeners are making racist hiring suggestions, which employers are acting on.

These are just a few examples of AI bias. Whether bias is reinforced during training stages for large language models (LLMs) or when somebody prompts a generative system, it can create a feedback loop that entrenches already prevalent societal biases.

This is a big problem, according to Sarem Yadegari, communications and training manager for Chapman’s Information Systems and Technology (IS&T) department. With the growing prevalence of AI systems in daily life, he said that purposeful steps need to be taken to recognize and avoid biased models.

“You have to review (the responses), and you have to reflect a little bit,” Yadegari said. “If you feel like (the model) is biased, it’s probably biased.”

Yadegari conducted research on AI bias when IS&T was crafting Chapman’s AI hub, and said he spends more than half his time working with AI systems and issues. One of the biggest problems he sees regarding bias is in the development stages of these models.

Humans have biases, whether conscious or subconscious. And when humans train AI models, they can impart the same prejudices, Yadegari said. If users don’t critically analyze their interactions with chatbots, those interactions can subtly reinforce or alter their opinions.

Essentially, if the data put into the system is biased, then the results will be biased too.

A recent Yale study found that ChatGPT users’ political beliefs can be swayed toward more conservative or liberal positions based on the LLM’s responses. Yadegari said that when doing research, people not only need to be critical of AI’s responses, but also need to use other resources. AI models should only be a starting point.

“Don’t rely on AI 100%,” he said. “Go to the library, check out some books. Talk to a few instructors. Talk to your peers. If you’re only relying on AI, the danger is that you’re not getting a diverse perspective on things.”

There are various types of bias to be aware of. There is selection bias: if training data is not representative of the real world, it can give models a skewed baseline of reality. Another is confirmation bias. Chapman’s AI hub lists an example: “if a hiring algorithm learns that past successful candidates were predominantly men, it may favor male applicants in the future.”
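The hiring example quoted above can be made concrete with a small sketch. The records below are invented for illustration, and the `train` and `score` functions are hypothetical stand-ins for a real screening system, not any vendor's actual method. Every applicant in the data is equally qualified, yet a model that only learns from past decisions still ends up preferring men.

```python
from collections import defaultdict

# Hypothetical historical hiring records: (gender, qualified, hired).
# The numbers are invented; past decisions favored men even though
# every applicant here was qualified.
HISTORY = (
    [("M", True, True)] * 40
    + [("M", True, False)] * 10
    + [("F", True, True)] * 10
    + [("F", True, False)] * 40
)


def train(records):
    """Learn the historical hire rate for each gender."""
    hired = defaultdict(int)
    total = defaultdict(int)
    for gender, _qualified, was_hired in records:
        total[gender] += 1
        hired[gender] += int(was_hired)
    return {g: hired[g] / total[g] for g in total}


def score(model, gender):
    """Score a new applicant using only what the model learned."""
    return model[gender]


model = train(HISTORY)
print(score(model, "M"))  # 0.8
print(score(model, "F"))  # 0.2
```

Nothing in the code mentions a preference for men. The gap comes entirely from the past decisions the model was trained on, which is the feedback loop the example describes.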

There are also biases that occur on the receiving end of the data. It has been found that AI systems tend to be yes-men for users, agreeing with and supporting whatever a person says in the chat.

AI companies must do their part to fix biases, according to Yadegari. But the industry’s fast growth hasn’t lent itself to high scrutiny when efficiency is king.

“Everybody wants to be first to market,” Yadegari said. “Very quickly, one company updates their AI model and then the next updates in order to stay relevant.”

Half of the four most prominent generative AI models — OpenAI ChatGPT, Microsoft Copilot, Anthropic Claude and Google Gemini — have readily available pages addressing potential biases.

OpenAI posted a study on political biases across its models last October, and the company has a dedicated page that was most recently updated two months ago.

The page marks three key points on AI bias: ChatGPT is skewed towards Western ideology, the model reinforces opinions by not offering counterpoints to prompts and it can also unfairly judge those who don’t speak English as their first language.

In its internal study of potential political biases, OpenAI found that its models stay relatively objective. However, the study was conducted by the company itself, which created its own scale to measure its own systems. The Yale study suggested that the chatbot may have a liberal-leaning bias, and that participants tended to agree with whatever answer was given, regardless of its political leaning.

Anthropic posted a page in November outlining political bias in Claude, claiming the AI is trained to be evenhanded. Google Gemini does not have any pages that tell users of potential bias or its harms. While Microsoft mentions AI bias in a note on transparency, it does not have a fully dedicated page.

None of these systems have a notice of bias when you go directly to the model, meaning most users will need to independently search for this information.

Yadegari preached accountability on all levels: from the moment a model is first trained, to the point where it spits out an answer for a student doing research for a class project. If we treat the systems as all-knowing, flawless entities — if we’re lazy — then AI can only serve to make societal prejudices more prevalent. Humans have to put in the work to reduce AI bias.

But to do that, society must first address its own prejudices, according to Yadegari. Because AI systems are trained by and on humans, they reflect issues within society, whether racist, sexist or misinformative.

Yadegari said that if humans can attempt to bring diverse perspectives into their lives, these issues can start to fade into the background.

“Bias exists, it’s out there,” he said. “Meet with someone … you’ve never interacted with and try to see things from their perspective. You don’t have to agree with it, you just have to respect them enough to hear them out.”

Once people do that, they will be able to bring a similar mindset to their interactions with generative AI and reduce bias on the receiving end. In turn, this higher level of scrutiny can teach models to be less biased.

You can’t give AI a one-sentence prompt and then accept the response at face value.

“Continuously question: ‘Why is this AI model giving me this answer?’” Yadegari said.

AI is in constant evolution. Bias reports and pages can go out of date quickly because of how often models are changed. Chapman’s own hub will be getting an update soon, according to Yadegari, but there is no guarantee of the same from the biggest AI companies.

Yadegari said that constant human oversight is important to keep AI bias at bay. It just depends on whether people want to take the time to do so, or whether they will let biased models shape more and more of their decisions.